Last updated: January 27, 2024, noon

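Introduction

Virtual machine installation

For testing purposes

Preparation

Image file: Ubuntu Server 20.04 LTS

VMware Workstation Pro 17

Installation process

CPU cores: 1 socket with 2 cores recommended

Memory: 2 GB recommended

Disk: 20 GB recommended, no less than 18 GB

Create three virtual machines with the same configuration

Alternatively, finish installing k8s on one machine (before initializing the master node), clone it, and then change the IP address and hostname of each clone

Enable SSH on the virtual machines so that Xshell can connect to them later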

Kubernetes configuration

Basic configuration for 1.19.4

Package source configuration

```shell
# Back up the sources.list file
# Remove the old sources
# Add the Aliyun sources
# Aliyun source
# Update the cache and upgrade
```
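The source-switching steps can be sketched roughly as below. This is only an illustration under assumptions: the function name `write_aliyun_sources` is made up, and the mirror URL `http://mirrors.aliyun.com/ubuntu/` and the four `focal` suites are assumed rather than taken from the original commands. The file path is a parameter so the function can be tried on a copy first.

```shell
# Sketch: back up an apt sources file and replace it with Aliyun mirror entries.
# write_aliyun_sources is a hypothetical helper name; the mirror URL and suites are assumptions.
write_aliyun_sources() (
  list_file="${1:-/etc/apt/sources.list}"
  codename="${2:-focal}"   # Ubuntu 20.04
  mirror="http://mirrors.aliyun.com/ubuntu/"

  # Keep a backup of the old source list before overwriting it.
  if [ -f "$list_file" ]; then
    cp "$list_file" "$list_file.bak"
  fi

  # Start from an empty file, then add one line per suite.
  : > "$list_file"
  for suite in "$codename" "$codename-security" "$codename-updates" "$codename-backports"; do
    echo "deb $mirror $suite main restricted universe multiverse" >> "$list_file"
  done
)
```

Run from a root shell, `write_aliyun_sources` with no arguments would rewrite `/etc/apt/sources.list`; `apt update` and `apt upgrade` would then refresh the cache and upgrade.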

Disable the firewall

Disable swap

```shell
# Turn off swap
# Permanently disable the swap partition
```
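Turning swap off for the running system is usually done with `sudo swapoff -a`. Making it permanent means commenting out the swap entry in `/etc/fstab`. The sketch below shows one way to do the second part; the helper name `comment_out_swap` is hypothetical, and it assumes the swap entry is a normal fstab line with `swap` as a whitespace-separated field.

```shell
# Sketch: comment out every active swap entry in an fstab-style file
# so that swap stays off after a reboot. comment_out_swap is a hypothetical name.
comment_out_swap() (
  fstab="${1:-/etc/fstab}"
  tmp=$(mktemp)
  # Lines that are already comments are skipped; any other line that has
  # "swap" as a whitespace-delimited field gets a "# " prefix.
  sed -E '/^[[:space:]]*#/!s/^(.*[[:space:]]swap[[:space:]].*)$/# \1/' "$fstab" > "$tmp" \
    && cat "$tmp" > "$fstab"
  rm -f "$tmp"
)
```

Writing back with `cat` rather than `mv` keeps the original file's owner and permissions.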

Disable SELinux

```shell
# Install the tool for controlling SELinux
# Disable SELinux
# Reboot the operating system
# After rebooting, check the SELinux status
# "Disabled" means it is turned off
```

Network configuration

Initialization

```shell
# Create k8s.conf
# Add the following content
# Apply the changes
```
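The exact contents of k8s.conf are not shown here. As a rough sketch, the kernel parameters that kubeadm setups commonly put in this file look like the following; the path `/etc/sysctl.d/k8s.conf`, the three settings, and the helper name `write_k8s_sysctl` are all assumptions.

```shell
# Sketch: write bridge and forwarding settings to a sysctl drop-in file.
# write_k8s_sysctl is a hypothetical helper; the path and settings are assumptions.
write_k8s_sysctl() (
  conf="${1:-/etc/sysctl.d/k8s.conf}"
  cat > "$conf" <<'EOF'
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF
)
```

After writing the file as root, `sudo sysctl --system` reloads all sysctl configuration files. The two bridge settings only exist once the `br_netfilter` kernel module is loaded.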

```shell
# Check the gateway and addresses
# nmcli dev show ens33
```

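```shell
# Edit the hosts file
# Add the IP entries of your three virtual machines
# After the IPs of all three machines are set,
# fill in each other's static IPs in the hosts file of every machine
# 192.168.231.xxx node01
# 192.168.231.xxx node02
# Reboot
# Apply the network configuration
```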
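Since the same hosts entries must be added on every machine, a small idempotent helper can save some typing. This is a sketch only: `add_host_entry` is a hypothetical name, and the concrete IP addresses in the usage note are placeholders to be replaced with your own static IPs.

```shell
# Sketch: append "IP hostname" to a hosts file unless the hostname is already mapped.
# add_host_entry is a hypothetical helper name.
add_host_entry() (
  ip="$1"
  name="$2"
  hosts="${3:-/etc/hosts}"
  # Look for the hostname as a whole field on a non-comment line.
  if ! grep -Eq "^[^#]*[[:space:]]$name([[:space:]]|\$)" "$hosts"; then
    printf '%s %s\n' "$ip" "$name" >> "$hosts"
  fi
)
```

In a root shell, calls such as `add_host_entry 192.168.231.128 master` and `add_host_entry 192.168.231.129 node01` (addresses are examples) can be repeated safely, because an existing mapping is never duplicated.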

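Install Docker

Remove old versions

```shell
# Uninstall the old version of Docker
# Clear the space occupied by the old Docker version
# Update the package index
```

Set up the installation environment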

Add the Aliyun GPG key

```shell
curl -fsSL http://mirrors.aliyun.com/docker-ce/linux/ubuntu/gpg | sudo apt-key add -
```

Add the Aliyun mirror repository

```shell
sudo add-apt-repository "deb [arch=amd64] http://mirrors.aliyun.com/docker-ce/linux/ubuntu $(lsb_release -cs) stable"
# Update
```

Install a specific version

```text
5:20.10.5~3-0~ubuntu-focal
```

Restart and check the installed version

```shell
# Restart
# Or
# Check the Docker version
```

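Set up the Aliyun registry mirror

Log in to the Aliyun Docker registry mirror console

Run the commands shown in the console

You can also add the following to daemon.json

```text
# Recommended by the official documentation to improve stability
"exec-opts": ["native.cgroupdriver=systemd"],
# 300m means each log file of a container is capped at 300 MB;
# 3 means a container keeps three log files: id+.json, id+1.json, id+2.json
"log-driver": "json-file",
"log-opts": {
  "max-size": "300m",
  "max-file": "3"
}
```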

Restart Docker

```shell
sudo systemctl daemon-reload
```

Add the user group

```shell
# Add the user to the docker group so that docker commands do not need sudo every time
```

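Appendix: some Docker commands

```shell
# List stopped containers
# Remove all stopped containers
# Build an image
docker build -t tilemap_pkg_services .
# Start a container from the image
# Enter the container's system:
# Then use the command below to enter the container, where bash can be used to browse its files:
```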

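Install K8S

Edit sources.list

```shell
sudo vim /etc/apt/sources.list
# Append the following at the end of /etc/apt/sources.list
```

Update the sources

```shell
sudo apt update
# If it fails with an error (No key)
# run
```

Install K8S

```shell
# Lab default version
```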

Check whether the installation succeeded

Enable K8S at boot

```shell
sudo systemctl enable kubelet && sudo systemctl start kubelet
```

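All three machines must be configured

Below are the options for quickly setting up three machines once the first one is configured

Configure one virtual machine, clone it three times, and change the IP and hostname of each clone

```shell
sudo hostnamectl set-hostname server.local
```

Or create two more virtual machines and configure them manually (not recommended)

Points to watch in the steps above when configuring three machines

The static IP of each machine and the hosts file of each machine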

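Once K8S is installed on all three machines

Perform the following steps

Master node configuration

Done on the Master virtual machine

```shell
# apiserver-advertise-address=192.168.xxx.xxx is the IP address of your Master (the one written in the hosts file and the static IP settings)
```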
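The full init command is not shown here, so the following is only a sketch of how such a command is typically assembled. The flags are standard `kubeadm init` flags, but the combination and the default values (image repository, version `v1.19.4`, pod CIDR `10.244.0.0/16`) are assumptions pieced together from values that appear elsewhere in this post. The helper `kubeadm_init_cmd` is hypothetical and only prints the command so it can be reviewed before running.

```shell
# Sketch: print a kubeadm init command for review instead of running it.
# kubeadm_init_cmd is a hypothetical helper; default values are assumptions.
kubeadm_init_cmd() (
  master_ip="$1"                      # static IP of the Master VM
  k8s_version="${2:-v1.19.4}"
  pod_cidr="${3:-10.244.0.0/16}"      # should match CALICO_IPV4POOL_CIDR
  repo="registry.aliyuncs.com/google_containers"
  printf 'sudo kubeadm init --apiserver-advertise-address=%s --image-repository=%s --kubernetes-version=%s --pod-network-cidr=%s\n' \
    "$master_ip" "$repo" "$k8s_version" "$pod_cidr"
)
```

For example, `kubeadm_init_cmd 192.168.231.128` prints a single command line that can be copied into the Master's shell once the values look right.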

Save the join command (important)

```shell
kubeadm join 192.168.231.128:6443 --token acqsqj.q62qu2owl78m7nem \
```

Copy the configuration file on the master node

```shell
mkdir -p $HOME/.kube
```

Deploy the network plugin

```shell
# Check the node status
# master NotReady
# Install the network plugin
# Edit the file:
# Change the value of typha_service_name to "calico-typha"
# Change the value of CALICO_IPV4POOL_IPIP to Never
# Uncomment CALICO_IPV4POOL_CIDR and change its value to "10.244.0.0/16"
# Apply the changes
# Check for the Running status
# Check the node status
# master Ready
```

Compatibility between the Calico network plugin and K8S versions

```text
Kubernetes version    Calico version    Calico documentation
```

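Configuring the two worker nodes

Done on the Node01 and Node02 virtual machines

Join the master node

This is the join command that was generated automatically when you ran kubeadm init on the master node

```shell
sudo kubeadm join 192.168.231.128:6443 --token acqsqj.q62qu2owl78m7nem \
```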

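Copy admin.conf

Xshell and XFTP are used to copy files between machines

Copy admin.conf from the master to each worker node and set the environment variable

```shell
# In Xshell on the master node
# Grant permissions on the /etc/kubernetes folder
# In XFTP, copy /etc/kubernetes/admin.conf from the master node to the desktop
# In Xshell on the worker node
# Grant permissions on the /etc/kubernetes folder
# In XFTP, copy admin.conf from the desktop to the /etc/kubernetes folder on the worker node
```

```shell
mkdir -p $HOME/.kube
```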

Installing Kuboard

Reference: Install Kuboard v3 - kubernetes | Kuboard

```shell
kubectl apply -f https://addons.kuboard.cn/kuboard/kuboard-v3.yaml
```

```text
NAME READY STATUS RESTARTS AGE
```

Access the Kuboard page

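Kubernetes operations

Delete evicted Pods

kubectl get pods [-n kuboard] | grep Evicted | awk '{print $1}' | xargs kubectl delete pod [-n kuboard]

The -n kuboard part is optional; when it is used, it must be passed to both kubectl commands.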
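A slightly stricter variant of the filter step is sketched below. Instead of matching the word anywhere on the line with `grep`, it checks the STATUS column only, so a Pod whose name happens to contain "Evicted" is not picked up. The function name `evicted_pod_names` is hypothetical, and it assumes the default single-namespace column layout (NAME READY STATUS RESTARTS AGE).

```shell
# Sketch: read `kubectl get pods` output on stdin and print the names of
# Pods whose STATUS column (third field) is exactly "Evicted".
# evicted_pod_names is a hypothetical helper name.
evicted_pod_names() {
  awk 'NR > 1 && $3 == "Evicted" { print $1 }'
}
```

It would be used as `kubectl get pods -n kuboard | evicted_pod_names | xargs -r kubectl delete pod -n kuboard`, where `-r` (GNU xargs) skips the delete when nothing matched.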

Create your first image

Reference: Running a helloworld on K8S - Jianshu (jianshu.com)

```shell
kubectl get namespaces   # list all namespaces
```

Use a NodePort Service

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: hello-node
  namespace: k8s-test01
  labels:
    app: hello-node
spec:
  replicas: 3
  selector:
    matchLabels:
      app: hello-node
  template:
    metadata:
      labels:
        app: hello-node
    spec:
      containers:
      - name: hello-node-container
        image: hello-node:v1
        ports:
        - containerPort: 8080
```

```yaml
apiVersion: v1
kind: Service
metadata:
  name: web
  namespace: k8s-test01
  labels:
    app: web
spec:
  type: NodePort
  ports:
  - port: 8099
    protocol: TCP
    targetPort: 8080
    nodePort: 30009
  selector:
    app: hello-node
```
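In this Service, `port: 8099` is the port inside the cluster, `targetPort: 8080` is the container port, and `nodePort: 30009` is opened on every node, so the application should be reachable at `http://<node IP>:30009`. By default Kubernetes only accepts nodePort values between 30000 and 32767. The helper below, with the hypothetical name `nodeport_url`, sketches that check and builds the URL.

```shell
# Sketch: validate a nodePort against the default range and print the access URL.
# nodeport_url is a hypothetical helper name.
nodeport_url() (
  node_ip="$1"
  port="$2"
  if [ "$port" -ge 30000 ] && [ "$port" -le 32767 ]; then
    echo "http://$node_ip:$port"
  else
    echo "nodePort $port is outside the default range 30000-32767" >&2
    exit 1
  fi
)
```

For instance, `curl "$(nodeport_url 192.168.231.128 30009)"` would request the hello-node application through the master's IP.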


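Create a private image registry

Purpose

Avoid repeatedly running docker build for the same image on different nodes

Reference: Deploying a Harbor image registry on a k8s cluster (blog of 机灵的小小子, CSDN)

After deployment, run docker login harborIP on each node machine

build, push, pull image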

Update the image

```yaml
apiVersion: apps/v1
```

Pod pull failure

Reference: [k8s] Fixing the permission error when Kubernetes pulls images from Harbor, "unauthorized_error response from daemon: unauthorized: unauthor" (CSDN blog)

```shell
# Give the Pod the equivalent of docker login
# regcred is the secret name and can be customized
```

Update the yaml

```yaml
apiVersion: apps/v1
```

Apply

```shell
kubectl apply -f deployment.yaml
```

Pods evicted from a node

Reference: kubelet pressure eviction - The node had condition: [DiskPressure], the node had condition: [MemoryPressure] (CSDN blog)

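Cluster upgrade

Notes

1. Upgrade one minor version at a time

1.19.x > 1.20.x > 1.21.x > 1.22.x ... 1.29.x

2. From 1.24.x on, k8s uses containerd as the container runtime and no longer uses Docker, so the container runtime must be switched

3. Command to list all available versions

```shell
apt-cache madison kubeadm
```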
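Because minor versions cannot be skipped, it helps to write down the full chain of hops before starting. The small sketch below prints that chain for a given start and target minor version; `upgrade_path` is a hypothetical helper name.

```shell
# Sketch: print every minor-version hop between two Kubernetes 1.x releases.
# upgrade_path is a hypothetical helper; arguments are minor numbers (e.g. 19 and 23).
upgrade_path() (
  minor="$1"
  target="$2"
  path="1.$minor"
  while [ "$minor" -lt "$target" ]; do
    minor=$((minor + 1))
    path="$path > 1.$minor"
  done
  echo "$path"
)

upgrade_path 19 23   # prints: 1.19 > 1.20 > 1.21 > 1.22 > 1.23
```

Each hop in the printed chain corresponds to one full pass of the master and worker upgrade steps.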

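1.19.x > ... 1.23.x (common procedure)

Master node upgrade

```shell
# Using 1.21.17 > 1.22.17 as an example!
# Protect the master node (master is the name of the current master node)
# Install the new version of kubeadm
# View the available upgrade plan
# Run the upgrade command
# If the console reports that the stable version differs from the current kubeadm version, use the kubeadm version for apply
# Upgrade kubelet and kubectl
# Restart the service
# Unprotect the master node
# Check the node status and versions
```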
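The individual commands are not shown here, so the following is a hedged sketch of what the step list usually maps to with kubeadm on Ubuntu. It assumes that "protect" means `kubectl drain`, that "unprotect" means `kubectl uncordon`, and that the apt packages carry a `-00` revision suffix; check `apt-cache madison kubeadm` for the real package version strings. The helper `print_master_upgrade_plan` is hypothetical and only prints the commands for review.

```shell
# Sketch: print the command sequence for upgrading the control-plane node.
# print_master_upgrade_plan is a hypothetical helper; the "-00" suffix is an assumption.
print_master_upgrade_plan() (
  node="$1"       # control-plane node name, e.g. master
  version="$2"    # target version without the leading "v", e.g. 1.22.17
  pkg="$version-00"
  cat <<EOF
kubectl drain $node --ignore-daemonsets
sudo apt-get install -y kubeadm=$pkg
sudo kubeadm upgrade plan
sudo kubeadm upgrade apply v$version
sudo apt-get install -y kubelet=$pkg kubectl=$pkg
sudo systemctl daemon-reload
sudo systemctl restart kubelet
kubectl uncordon $node
kubectl get nodes
EOF
)
```

Calling `print_master_upgrade_plan master 1.22.17` lists the nine commands in the same order as the steps above, so each one can be run and checked by hand.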

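Worker node upgrade

After the master node upgrade is complete

```shell
# Install the new version of kubeadm
# Protect the worker node
# Upgrade the node
# Upgrade kubelet and kubectl
# Restart the service
# Unprotect the node
```

Check the upgrade result

```shell
# Check the node status and versions
```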

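1.23.x > ... 1.29.x (common procedure)

Container runtime migration

From version 1.24.x on, the container runtime is containerd

When upgrading from 1.23.x to 1.24.x, the container runtime must be migrated from Docker to containerd!

The migration is only needed between these two versions; once containerd is in use on 1.24.x and later, no further migration is required.

Skip this step for upgrades after 1.24.x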

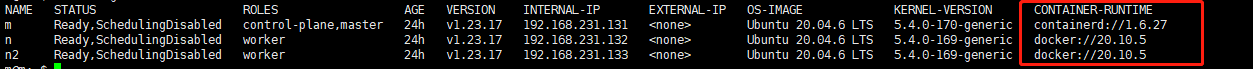

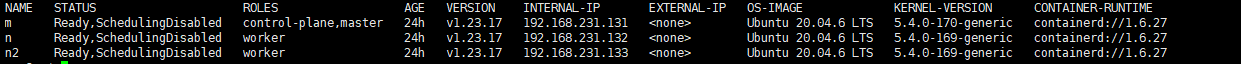

```shell
# Check the container runtime
# If the last column is containerd, do not migrate; if it is docker, migration is required
```
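The runtime is shown in the CONTAINER-RUNTIME column, which is the last column of `kubectl get nodes -o wide`. The sketch below turns that output into a per-node decision; `runtime_report` is a hypothetical helper name, and it assumes the runtime value starts with `docker:` when Docker is in use.

```shell
# Sketch: read `kubectl get nodes -o wide` on stdin and report, per node,
# whether the runtime still needs to be migrated.
# runtime_report is a hypothetical helper name.
runtime_report() {
  awk 'NR > 1 {
    # The last field is the CONTAINER-RUNTIME column, e.g. docker://20.10.5
    if ($NF ~ /^docker:/) print $1, "migrate"
    else print $1, "ok"
  }'
}
```

Usage would be `kubectl get nodes -o wide | runtime_report`.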

```shell
# The steps are the same on every node: master, node01, node02...
# Runtime migration: docker > containerd
# Protect the master node (master is the name of the current master node)
# Stop the services
```

```shell
# A default Docker installation already uses containerd as its backend runtime, so containerd does not need to be installed separately
# Generate the default containerd configuration
# View the k8s runtime arguments
# Note down a value like registry.aliyuncs.com/google_containers/pause:3.2
```

```shell
# Edit the default configuration
# Find disabled_plugins = [] and comment it out
# Find sandbox_image and change its value to "registry.aliyuncs.com/google_containers/pause:3.2"
# Find [plugins."io.containerd.grpc.v1.cri".registry.mirrors] and add below it
# [plugins."io.containerd.grpc.v1.cri".registry.mirrors."docker.io"]
#   endpoint = ["https://bqr1dr1n.mirror.aliyuncs.com"]
# [plugins."io.containerd.grpc.v1.cri".registry.mirrors."k8s.gcr.io"]
#   endpoint = ["https://registry.aliyuncs.com/k8sxio"]
```
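The `sandbox_image` edit can also be scripted instead of done by hand. The sketch below is one possible way; `set_sandbox_image` is a hypothetical helper name, and `/etc/containerd/config.toml` is assumed to be the config path (the default file can be generated with `containerd config default | sudo tee /etc/containerd/config.toml`).

```shell
# Sketch: replace the value of sandbox_image in a containerd config file,
# keeping the original indentation. set_sandbox_image is a hypothetical name.
set_sandbox_image() (
  image="$1"
  config="${2:-/etc/containerd/config.toml}"
  tmp=$(mktemp)
  # Group 1 captures everything up to and including "=", so only the value changes.
  sed -E "s|^([[:space:]]*sandbox_image[[:space:]]*=[[:space:]]*).*\$|\1\"$image\"|" "$config" > "$tmp" \
    && cat "$tmp" > "$config"
  rm -f "$tmp"
)
```

In a root shell, `set_sandbox_image registry.aliyuncs.com/google_containers/pause:3.2` applies the value noted down from the k8s runtime arguments.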

```shell
# Change the k8s runtime arguments from docker to containerd
# Add the following arguments
# --container-runtime=remote
# --container-runtime-endpoint=unix:///run/containerd/containerd.sock
# Remove
# --network-plugin=cni
```
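The file that holds these arguments is not named here. On kubeadm installations they usually live in `/var/lib/kubelet/kubeadm-flags.env` as a single `KUBELET_KUBEADM_ARGS="..."` line, and the sketch below assumes exactly that layout. The helper name `switch_kubelet_to_containerd` is hypothetical.

```shell
# Sketch: rewrite KUBELET_KUBEADM_ARGS so that kubelet talks to containerd.
# Assumes a single-line KUBELET_KUBEADM_ARGS="..." file; the helper name is hypothetical.
switch_kubelet_to_containerd() (
  env_file="${1:-/var/lib/kubelet/kubeadm-flags.env}"
  sock="unix:///run/containerd/containerd.sock"
  tmp=$(mktemp)
  # 1) drop --network-plugin=cni
  # 2) drop any existing runtime flags so that re-running changes nothing
  # 3) put the two containerd flags at the front of the argument string
  sed -E \
    -e 's/[[:space:]]*--network-plugin=cni//' \
    -e 's/[[:space:]]*--container-runtime=[^ "]*//' \
    -e 's/[[:space:]]*--container-runtime-endpoint=[^ "]*//' \
    -e "s|^(KUBELET_KUBEADM_ARGS=\")[[:space:]]*|\1--container-runtime=remote --container-runtime-endpoint=$sock |" \
    "$env_file" > "$tmp" && cat "$tmp" > "$env_file"
  rm -f "$tmp"
)
```

Other arguments on the line, such as `--pod-infra-container-image`, are left untouched, and running the function twice gives the same result as running it once.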

```shell
# Edit the node configuration
# kubeadm.alpha.kubernetes.io/cri-socket: /var/run/dockershim.sock
# change to
# kubeadm.alpha.kubernetes.io/cri-socket: /var/run/containerd/containerd.sock
```

```shell
# Restart the services
```

Example toml

```toml
imports = []
oom_score = 0
plugin_dir = ""
required_plugins = []
root = "/var/lib/containerd"
state = "/run/containerd"
temp = ""
version = 2

[cgroup]
  path = ""

[debug]
  address = ""
  format = ""
  gid = 0
  level = ""
  uid = 0

[grpc]
  address = "/run/containerd/containerd.sock"
  gid = 0
  max_recv_message_size = 16777216
  max_send_message_size = 16777216
  tcp_address = ""
  tcp_tls_ca = ""
  tcp_tls_cert = ""
  tcp_tls_key = ""
  uid = 0

[metrics]
  address = ""
  grpc_histogram = false

[plugins]

  [plugins."io.containerd.gc.v1.scheduler"]
    deletion_threshold = 0
    mutation_threshold = 100
    pause_threshold = 0.02
    schedule_delay = "0s"
    startup_delay = "100ms"

  [plugins."io.containerd.grpc.v1.cri"]
    device_ownership_from_security_context = false
    disable_apparmor = false
    disable_cgroup = false
    disable_hugetlb_controller = true
    disable_proc_mount = false
    disable_tcp_service = true
    enable_selinux = false
    enable_tls_streaming = false
    enable_unprivileged_icmp = false
    enable_unprivileged_ports = false
    ignore_image_defined_volumes = false
    max_concurrent_downloads = 3
    max_container_log_line_size = 16384
    netns_mounts_under_state_dir = false
    restrict_oom_score_adj = false
    sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.2"
    selinux_category_range = 1024
    stats_collect_period = 10
    stream_idle_timeout = "4h0m0s"
    stream_server_address = "127.0.0.1"
    stream_server_port = "0"
    systemd_cgroup = false
    tolerate_missing_hugetlb_controller = true
    unset_seccomp_profile = ""

    [plugins."io.containerd.grpc.v1.cri".cni]
      bin_dir = "/opt/cni/bin"
      conf_dir = "/etc/cni/net.d"
      conf_template = ""
      ip_pref = ""
      max_conf_num = 1

    [plugins."io.containerd.grpc.v1.cri".containerd]
      default_runtime_name = "runc"
      disable_snapshot_annotations = true
      discard_unpacked_layers = false
      ignore_rdt_not_enabled_errors = false
      no_pivot = false
      snapshotter = "overlayfs"

      [plugins."io.containerd.grpc.v1.cri".containerd.default_runtime]
        base_runtime_spec = ""
        cni_conf_dir = ""
        cni_max_conf_num = 0
        container_annotations = []
        pod_annotations = []
        privileged_without_host_devices = false
        runtime_engine = ""
        runtime_path = ""
        runtime_root = ""
        runtime_type = ""

        [plugins."io.containerd.grpc.v1.cri".containerd.default_runtime.options]

      [plugins."io.containerd.grpc.v1.cri".containerd.runtimes]

        [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc]
          base_runtime_spec = ""
          cni_conf_dir = ""
          cni_max_conf_num = 0
          container_annotations = []
          pod_annotations = []
          privileged_without_host_devices = false
          runtime_engine = ""
          runtime_path = ""
          runtime_root = ""
          runtime_type = "io.containerd.runc.v2"

          [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc.options]
            BinaryName = ""
            CriuImagePath = ""
            CriuPath = ""
            CriuWorkPath = ""
            IoGid = 0
            IoUid = 0
            NoNewKeyring = false
            NoPivotRoot = false
            Root = ""
            ShimCgroup = ""
            SystemdCgroup = false

      [plugins."io.containerd.grpc.v1.cri".containerd.untrusted_workload_runtime]
        base_runtime_spec = ""
        cni_conf_dir = ""
        cni_max_conf_num = 0
        container_annotations = []
        pod_annotations = []
        privileged_without_host_devices = false
        runtime_engine = ""
        runtime_path = ""
        runtime_root = ""
        runtime_type = ""

        [plugins."io.containerd.grpc.v1.cri".containerd.untrusted_workload_runtime.options]

    [plugins."io.containerd.grpc.v1.cri".image_decryption]
      key_model = "node"

    [plugins."io.containerd.grpc.v1.cri".registry]
      config_path = ""

      [plugins."io.containerd.grpc.v1.cri".registry.auths]

      [plugins."io.containerd.grpc.v1.cri".registry.configs]

      [plugins."io.containerd.grpc.v1.cri".registry.headers]

      [plugins."io.containerd.grpc.v1.cri".registry.mirrors]

        [plugins."io.containerd.grpc.v1.cri".registry.mirrors."docker.io"]
          endpoint = ["https://bqr1dr1n.mirror.aliyuncs.com"]

        [plugins."io.containerd.grpc.v1.cri".registry.mirrors."k8s.gcr.io"]
          endpoint = ["https://registry.aliyuncs.com/k8sxio"]

    [plugins."io.containerd.grpc.v1.cri".x509_key_pair_streaming]
      tls_cert_file = ""
      tls_key_file = ""

  [plugins."io.containerd.internal.v1.opt"]
    path = "/opt/containerd"

  [plugins."io.containerd.internal.v1.restart"]
    interval = "10s"

  [plugins."io.containerd.internal.v1.tracing"]
    sampling_ratio = 1.0
    service_name = "containerd"

  [plugins."io.containerd.metadata.v1.bolt"]
    content_sharing_policy = "shared"

  [plugins."io.containerd.monitor.v1.cgroups"]
    no_prometheus = false

  [plugins."io.containerd.runtime.v1.linux"]
    no_shim = false
    runtime = "runc"
    runtime_root = ""
    shim = "containerd-shim"
    shim_debug = false

  [plugins."io.containerd.runtime.v2.task"]
    platforms = ["linux/amd64"]
    sched_core = false

  [plugins."io.containerd.service.v1.diff-service"]
    default = ["walking"]

  [plugins."io.containerd.service.v1.tasks-service"]
    rdt_config_file = ""

  [plugins."io.containerd.snapshotter.v1.aufs"]
    root_path = ""

  [plugins."io.containerd.snapshotter.v1.btrfs"]
    root_path = ""

  [plugins."io.containerd.snapshotter.v1.devmapper"]
    async_remove = false
    base_image_size = ""
    discard_blocks = false
    fs_options = ""
    fs_type = ""
    pool_name = ""
    root_path = ""

  [plugins."io.containerd.snapshotter.v1.native"]
    root_path = ""

  [plugins."io.containerd.snapshotter.v1.overlayfs"]
    mount_options = []
    root_path = ""
    sync_remove = false
    upperdir_label = false

  [plugins."io.containerd.snapshotter.v1.zfs"]
    root_path = ""

  [plugins."io.containerd.tracing.processor.v1.otlp"]
    endpoint = ""
    insecure = false
    protocol = ""

[proxy_plugins]

[stream_processors]

  [stream_processors."io.containerd.ocicrypt.decoder.v1.tar"]
    accepts = ["application/vnd.oci.image.layer.v1.tar+encrypted"]
    args = ["--decryption-keys-path", "/etc/containerd/ocicrypt/keys"]
    env = ["OCICRYPT_KEYPROVIDER_CONFIG=/etc/containerd/ocicrypt/ocicrypt_keyprovider.conf"]
    path = "ctd-decoder"
    returns = "application/vnd.oci.image.layer.v1.tar"

  [stream_processors."io.containerd.ocicrypt.decoder.v1.tar.gzip"]
    accepts = ["application/vnd.oci.image.layer.v1.tar+gzip+encrypted"]
    args = ["--decryption-keys-path", "/etc/containerd/ocicrypt/keys"]
    env = ["OCICRYPT_KEYPROVIDER_CONFIG=/etc/containerd/ocicrypt/ocicrypt_keyprovider.conf"]
    path = "ctd-decoder"
    returns = "application/vnd.oci.image.layer.v1.tar+gzip"

[timeouts]
  "io.containerd.timeout.bolt.open" = "0s"
  "io.containerd.timeout.shim.cleanup" = "5s"
  "io.containerd.timeout.shim.load" = "5s"
  "io.containerd.timeout.shim.shutdown" = "3s"
  "io.containerd.timeout.task.state" = "2s"

[ttrpc]
  address = ""
  gid = 0
  uid = 0
```

Migration result

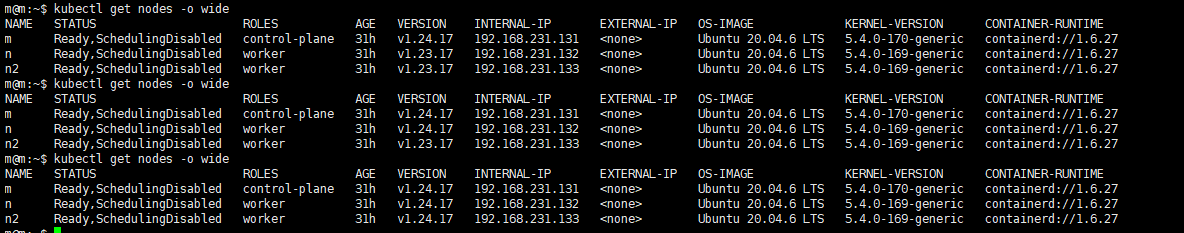

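Master node upgrade

```shell
# Using 1.23.17 > 1.24.17 as an example!
# Protect the master node (master is the name of the current master node)
# Install the new version of kubeadm
# View the available upgrade plan
# Run the upgrade command
# If the console reports that the stable version differs from the current kubeadm version, use the kubeadm version for apply
# Upgrade kubelet and kubectl
# Restart the service
# Unprotect the master node
# Check the node status and versions
```

Worker node upgrade

After the master node upgrade is complete

```shell
# Install the new version of kubeadm
# Protect the worker node
# Upgrade the node
# Upgrade kubelet and kubectl
# Restart the service
# Unprotect the node
```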
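For a worker node the sequence is shorter, because `kubeadm upgrade node` replaces the plan and apply steps. The sketch below follows the order of the steps above and rests on assumptions: drain and uncordon stand in for "protect" and "unprotect", the `-00` package suffix is a guess to verify with `apt-cache madison kubeadm`, and `print_worker_upgrade_plan` is a hypothetical helper that only prints the commands.

```shell
# Sketch: print the command sequence for upgrading one worker node.
# print_worker_upgrade_plan is a hypothetical helper; the "-00" suffix is an assumption.
print_worker_upgrade_plan() (
  node="$1"       # worker node name, e.g. node01
  version="$2"    # target version without the leading "v", e.g. 1.24.17
  pkg="$version-00"
  cat <<EOF
sudo apt-get install -y kubeadm=$pkg
kubectl drain $node --ignore-daemonsets
sudo kubeadm upgrade node
sudo apt-get install -y kubelet=$pkg kubectl=$pkg
sudo systemctl daemon-reload
sudo systemctl restart kubelet
kubectl uncordon $node
EOF
)
```

The `kubectl` lines need a working kubeconfig, which the worker nodes have once admin.conf has been copied over from the master.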

Check the upgrade result

```shell
# Check the node status and versions
```

Upgrade result

New version configuration